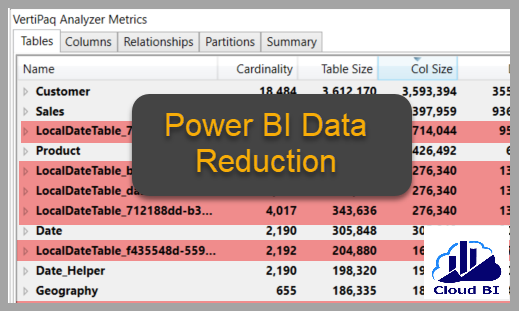

Power BI VertiPaq engine uses a high compression algorithm based on Columnstore structure, enabling it to load the data into the data model with significant size reduction compared to the original data size. But this doesn’t mean that the developers and data modeller to be careless about the size of the model and don’t follow the best practice to minimise memory consumption. We should never forget the size limitation of the dataset in Power BI Service and the low query performance of the large dataset.

In this article, I show some tips and considerations to reduce the size of the Power BI dataset.

1- Delete any column which is not needed in the reports

There should always be some columns in the tables that don’t have any analytics value, or no one needs them in the reports. Find them in the tables and delete them in Power Query, or exclude them from source data if applicable, for example, when the data source is a database view.

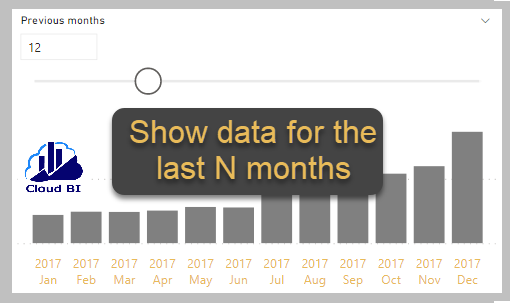

2- Remove the unneeded rows

Filter rows in Power Query or from the underlying data source if only you require a subset of rows in the reports.

3- Turn Auto date time off

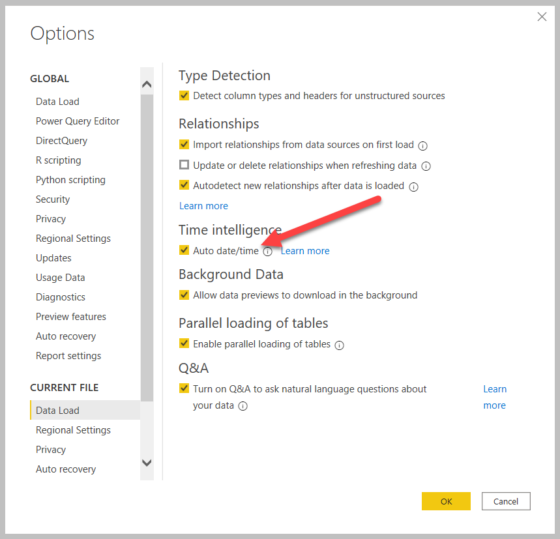

In Power BI Desktop, Auto date/time is enabled by default, creating a date hierarchy for each Date and DateTime column in the model. In the background, the Power BI engine makes a temporary table for each column, taking considerable memory if there are too many date columns in the model. Disabling this option and using a Date table instead will save this memory usage.

4- Manage DateTime columns

Datetime columns can consume a lot of memory, especially when they come in the fact tables. DateTime can keep time value up to a fraction of a millisecond, and it brings high cardinality to the VertiPaq engine and consumes large memory for Data and Dictionary storage. Therefore, the best practice is to convert DateTime to date or split them into two columns if you need to use time value in the reports.

5- Convert Decimal Number to Fixed Decimal Number

Decimal Numbers can represent the numbers with precision up to 15 digits which is inefficient when data has excessive accuracy.

By changing DataType to Fixed Decimal Number, the floating part of the number would be rounded to 4 digits which brings the cardinality down and takes less memory.

6- Convert Text columns to Numeric

One step of storing data in the VertiPaq engine is to encode the non-numeric columns by assigning a numeric identifier to each unique value in the column. In some specific instances, such as sales order number or invoice number, we can convert the Text columns to numeric, which can significantly reduce the memory usage by the column. We better change the Default Summarization property to “Do Not Summarize” for these columns to avoid inappropriate summarization.

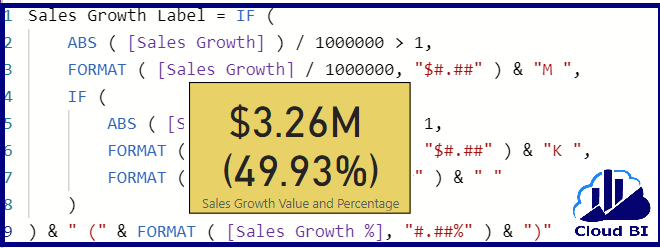

7- Preference creating the new columns in Power Query by M instead of using calculated column by DAX

The VertiPaq engine stores calculated columns (defined in DAX) just like regular columns. However, the data structures are stored slightly differently and typically achieve less efficient compression. The recommendation here is to create the new columns in Power Query or even in the data source if possible.

8- Disable load in Power Query

Should not load those tables and queries intended to be used only for supporting the other queries and are not required in the reports into the model. You can disable load for them in Power Query.

9- Use the right granularity for the fac tables

Summarising the fact tables can massively reduce the number of rows and minimise memory usage. For example, a Sales table with Order Numbers and Line Items with millions of records takes massive storage. However, if there were no analysis requirements for the sales detail in the reports, we can use a summarised table by grouping records by day(or even week or month), product, customer, and other required dimension’s keys to have a much smaller table.

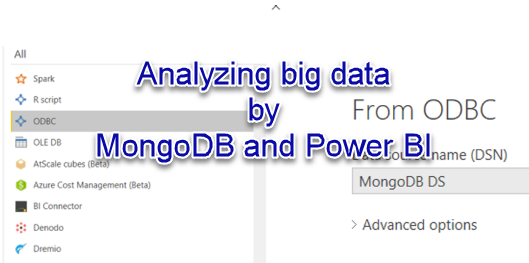

10- Use Composite mode and Aggregation

Power BI Composite mode and Aggregations are incredible methods to balance memory usage and query performance. In this way, we can use both Import and Direct Query modes in one model and direct the reports to use the aggregated values from the memory and detail by sending queries to the underlying data sources. You can read more about Power BI Composite mode in my other blog here.

In addition to the above points, always spend time reviewing the storage used in your Power BI file before deploying it to the service. You can do this easily by DAX Studio, which is an external tool for Power BI.

Summary

Power BI highly compresses the data when loading them into the model, allowing us to load tables with millions of records quickly. But as there is a size limitation in the Power BI service, we should be mindful about loading the data and follow the best practice in data modelling. I this post, we went through some principles and techniques to efficiently minimize the size of Power BI datasets.